We use fixed-wing unmanned aircraft to map extensive territories and multirotor drones to build precise 3D models in construction and other fields. For each job we choose a suitable sensor, prepare an optimal flight plan and lay out photogrammetric control points.

The flight yields the following outputs: an orthophotomap, a point cloud, a 3D mesh model, a digital surface model and other products. All data are georeferenced and delivered in standard formats for further processing in GIS applications.
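The article does not say which georeferencing formats are delivered. One widespread way to attach georeferencing to an ordinary image is an ESRI world file (for example a `.tfw` next to a TIFF): six numbers defining an affine transform from pixel indices to map coordinates. The sketch below assumes that format; the coefficient values are hypothetical.

```python
from typing import Sequence, Tuple


def read_world_file(text: str) -> Tuple[float, ...]:
    """Parse the six coefficients (A, D, B, E, C, F) of a world file, in file order."""
    values = tuple(float(token) for token in text.split())
    if len(values) != 6:
        raise ValueError("a world file must contain exactly six numbers")
    return values


def pixel_to_map(col: float, row: float, coeffs: Sequence[float]) -> Tuple[float, float]:
    """Map coordinates of the centre of pixel (col, row); indices are 0-based."""
    a, d, b, e, c, f = coeffs
    # A, E: pixel size in x and y (E is negative for north-up images)
    # D, B: rotation terms; C, F: map coordinates of the centre of the upper-left pixel
    return (a * col + b * row + c, d * col + e * row + f)


# Hypothetical 5 mm/px orthophoto, north up, no rotation.
wf = read_world_file("0.005\n0.0\n0.0\n-0.005\n500000.0025\n5500000.9975\n")
print(pixel_to_map(1000, 2000, wf))  # approximately (500005.0025, 5499990.9975)
```

The affine form also covers rotated images through the D and B terms; a GeoTIFF stores equivalent information inside the file itself.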

The online interface makes the results available from anywhere, without downloading the full data package. The browser can overlay existing map layers and measure distances and areas, and it supports GPS localization.
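How the browser computes distances is not described here. As an illustration only, a common way to measure the distance between two GPS (WGS84 latitude/longitude) positions is the haversine formula, which assumes a spherical Earth. That is adequate for quick measurements but less accurate than ellipsoidal methods; the function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; spherical-Earth approximation


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two latitude/longitude points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2.0 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def path_length_m(track: Sequence[Tuple[float, float]]) -> float:
    """Length of a polyline given as (lat, lon) vertices in degrees."""
    return sum(haversine_m(*p, *q) for p, q in zip(track, track[1:]))


# One degree of longitude along the equator is roughly 111.2 km.
print(round(haversine_m(0.0, 0.0, 0.0, 1.0)))
```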

Our company carries out photogrammetric imaging with an unmanned aerial vehicle, followed by digital reconstruction of the spatial model. The process can cover individual objects as well as large landscape units. The resulting model is used to accurately measure distances, areas and volumes, to carry out more complex geographic analyses and cartographic calculations, and to prepare orthophotomaps, visualizations, etc. The entire flight is planned and performed automatically. It is a fast and flexible measurement method, suitable even for hard-to-access areas where other surveying methods may not be feasible.

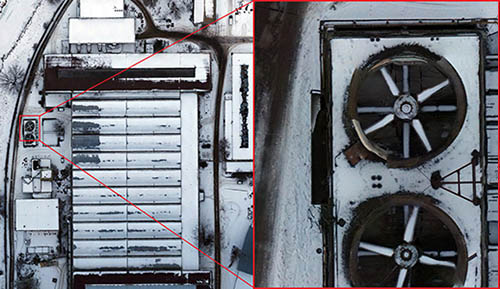
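Volume measurement, mentioned above, can be illustrated with a gridded surface model. The sketch below is a simplified example, not the method of any particular software: it assumes a DSM stored as a grid of heights with square cells and a horizontal base plane. Real stockpile tools often use a base surface interpolated from the pile's boundary instead. Names and values are hypothetical.

```python
from typing import List, Optional, Tuple


def volumes_against_base(dsm: List[List[Optional[float]]], cell_size_m: float,
                         base_z: float) -> Tuple[float, float]:
    """Return (volume_above, volume_below) in m^3 relative to a horizontal plane at base_z."""
    cell_area = cell_size_m * cell_size_m
    above = below = 0.0
    for row in dsm:
        for z in row:
            if z is None:  # no-data cell, e.g. a gap in the point cloud
                continue
            if z > base_z:
                above += (z - base_z) * cell_area  # material standing above the base
            else:
                below += (base_z - z) * cell_area  # depression below the base
    return above, below


# Hypothetical 3 x 3 DSM with 0.5 m cells: one 2 m high cell and one no-data cell.
dsm = [[100.0, 100.0, 100.0],
       [100.0, 102.0, 100.0],
       [100.0, 100.0, None]]
print(volumes_against_base(dsm, 0.5, 100.0))  # (0.5, 0.0)
```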

The imaging resolution is 0.5 cm/pixel. We operate a wide range of sensors, not only for the visible spectrum, so the map may also include, for instance, a thermographic layer. Outputs are provided in various formats and coordinate systems, and they are also available in the client's online browser for instant access from anywhere.

An orthophoto (orthomosaic) is the most sought-after output of aerial photogrammetry. It combines the properties of a map (a coordinate system and scale, so it can be measured) with those of an aerial photograph (legibility and completeness). The images are orthorectified during processing, i.e. the geometric distortion caused by varying terrain heights is eliminated, so that each point appears as if viewed vertically from above. In practice, this means the view of the terrain is not obstructed by, for example, a building facade (a so-called "true orthophoto"). These properties make it possible to combine an orthophotomap with other maps or to use it directly as a layer in GIS applications.
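The distortion that orthorectification removes can be quantified with the textbook relief-displacement formula d = r·h/H for a vertical photo over a flat datum: r is the radial image distance of the displaced point, h its height and H the flying height. The sketch below checks the formula against the direct projection; all values (building height, distances, focal length in pixels) are hypothetical.

```python
def radial_image_distance(horizontal_dist_m: float, point_height_m: float,
                          flying_height_m: float, focal_px: float) -> float:
    """Distance (px) from the principal point for a vertical photo over a flat datum."""
    return focal_px * horizontal_dist_m / (flying_height_m - point_height_m)


def relief_displacement(r_px: float, point_height_m: float, flying_height_m: float) -> float:
    """Textbook relief-displacement formula d = r * h / H (r measured to the displaced point)."""
    return r_px * point_height_m / flying_height_m


# Hypothetical example: a 20 m facade, 50 m from nadir, flown at 100 m with f = 4000 px.
foot = radial_image_distance(50.0, 0.0, 100.0, 4000.0)   # base of the building
top = radial_image_distance(50.0, 20.0, 100.0, 4000.0)   # roof edge
print(top - foot, relief_displacement(top, 20.0, 100.0))  # 500.0 500.0
```

At this flying height each pixel covers about 2.5 cm of ground, so the roof edge appears shifted by roughly 12.5 m; projecting the photo onto a surface model that contains the building removes this lean.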

In the online browser, an orthophotomap can be viewed interactively: you can measure points, distances and areas and change the scale using the buttons.

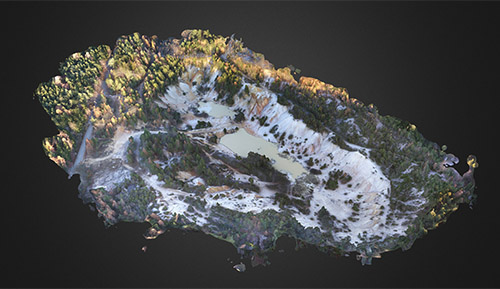

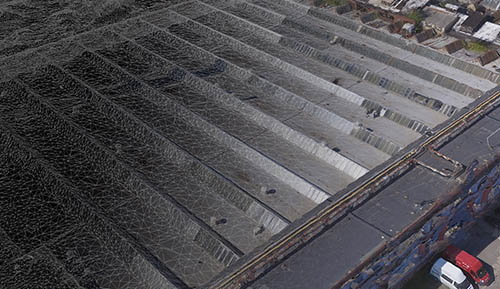

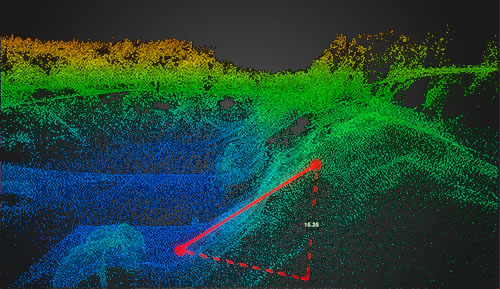
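Because an orthophotomap is delivered in a projected coordinate system with metric units, distances and areas can be measured with plain planar geometry. The following is a minimal sketch under that assumption (it is not the viewer's actual code): polyline length as a sum of segment lengths, and polygon area by the shoelace formula, valid for simple, non-self-intersecting polygons. Coordinates are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def polyline_length(points: List[Point]) -> float:
    """Sum of straight segment lengths between consecutive vertices."""
    return sum(math.dist(p, q) for p, q in zip(points, points[1:]))


def polygon_area(points: List[Point]) -> float:
    """Shoelace formula; vertices in drawing order, polygon closed implicitly."""
    n = len(points)
    twice_area = 0.0
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


# Hypothetical 30 m x 20 m plot in projected coordinates (e.g. metres in a UTM zone).
plot = [(0.0, 0.0), (30.0, 0.0), (30.0, 20.0), (0.0, 20.0)]
print(polyline_length(plot + plot[:1]), polygon_area(plot))  # 100.0 600.0
```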

From photogrammetric images we can produce three types of 3D representation of the mapped area. Point clouds, digital terrain models (DTM, DEM) and digital surface models (DSM) combine topographic and height (hypsometric) data. They are used for accurate analysis, for modelling and simulation (such as flood and avalanche zones, or signal and light propagation over terrain) and as supporting documentation for building design and for producing cartographic maps and plans. The third type, 3D models with surface texture, typically serves directly for visualization and presentation of the area and buildings of interest, for producing interactive scenes and animations, or as supporting material for architectural and urban studies.
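As a toy illustration of terrain-model simulation, the sketch below marks DTM cells that would be inundated at a given water level and are connected (4-neighbour connectivity) to a starting cell. This naive "bathtub" model is an assumption for illustration only; real flood modelling uses hydraulic simulation with flow, time and channel geometry. The grid and names are hypothetical.

```python
from collections import deque
from typing import List, Set, Tuple

Cell = Tuple[int, int]


def flood_extent(dtm: List[List[float]], water_level: float, seed: Cell) -> Set[Cell]:
    """Cells 4-connected to `seed` whose elevation is below `water_level`."""
    rows, cols = len(dtm), len(dtm[0])
    if dtm[seed[0]][seed[1]] >= water_level:
        return set()
    flooded = {seed}
    queue = deque([seed])
    while queue:  # breadth-first search over 4-connected neighbours
        r, c = queue.popleft()
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and (nr, nc) not in flooded:
                if dtm[nr][nc] < water_level:
                    flooded.add((nr, nc))
                    queue.append((nr, nc))
    return flooded


# Hypothetical 3 x 4 DTM (m); column 2 is a ridge separating two depressions.
dtm = [[1.0, 1.0, 5.0, 1.0],
       [1.0, 2.0, 5.0, 1.0],
       [3.0, 3.0, 5.0, 1.0]]
print(sorted(flood_extent(dtm, 2.5, (0, 0))))  # [(0, 0), (0, 1), (1, 0), (1, 1)]
```

The right-hand column is lower than the water level but stays dry because the ridge cuts it off from the starting cell. A simple threshold on heights would miss this.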

Interactive visualization of a 3D model.

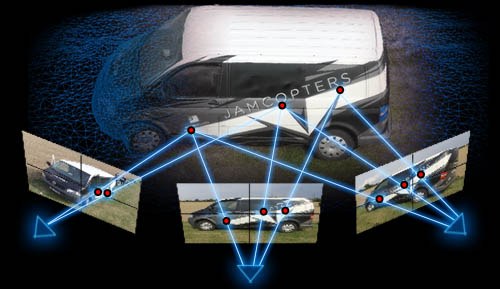

If the measured object appears in at least two photos taken from different viewpoints, the 3D position of its points can be reconstructed. To do this, we need the so-called "elements of interior orientation" of the images, which describe the internal geometry of the sensor and lens and thus determine the projection parameters. We also need the "elements of exterior orientation", i.e. the position and orientation of the sensor in space at the moment of exposure. For a point on the object that is visible in both photos, we can then construct, for each photo, a ray that passes through the image of the point and the projection centre of the camera, consistent with the projection parameters. The intersection of these rays in space defines the 3D coordinates of the selected point (because of measurement errors the rays rarely meet exactly, so in practice the point closest to them is used).
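The two-ray intersection described above can be sketched in a few lines. This is a minimal illustration, assuming an ideal distortion-free pinhole camera that looks along its own -z axis, known interior orientation (focal length, principal point) and known exterior orientation (projection centre, rotation matrix). It uses the midpoint method: the 3D point is taken as the midpoint of the shortest segment between the two rays. All names and numbers are hypothetical.

```python
from typing import Sequence, Tuple

Vec = Tuple[float, float, float]
Mat = Sequence[Sequence[float]]  # 3x3 rotation matrix, camera frame -> world frame


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def add(a: Vec, b: Vec, s: float = 1.0) -> Vec:
    """Return a + s * b."""
    return (a[0] + s * b[0], a[1] + s * b[1], a[2] + s * b[2])


def mat_vec(m: Mat, v: Vec) -> Vec:
    return tuple(m[i][0] * v[0] + m[i][1] * v[1] + m[i][2] * v[2] for i in range(3))


def transpose(m: Mat) -> Mat:
    return [[m[j][i] for j in range(3)] for i in range(3)]


def project(p: Vec, centre: Vec, rot: Mat, f: float, x0: float = 0.0, y0: float = 0.0):
    """Collinearity equations: image coordinates of world point p."""
    v = mat_vec(transpose(rot), add(p, centre, -1.0))  # world -> camera frame
    return (x0 - f * v[0] / v[2], y0 - f * v[1] / v[2])


def ray_direction(xy, rot: Mat, f: float, x0: float = 0.0, y0: float = 0.0) -> Vec:
    """Direction of the ray from the projection centre through image point xy."""
    # Interior orientation: focal length f and principal point (x0, y0).
    cam = (xy[0] - x0, xy[1] - y0, -f)
    # Exterior orientation: rotate the ray into the world frame.
    return mat_vec(rot, cam)


def triangulate(c1: Vec, d1: Vec, c2: Vec, d2: Vec) -> Vec:
    """Midpoint of the shortest segment between rays c1 + s*d1 and c2 + t*d2."""
    w0 = add(c1, c2, -1.0)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12 * a * c:
        raise ValueError("rays are (nearly) parallel")
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    p1, p2 = add(c1, d1, s), add(c2, d2, t)
    return tuple((u + v) / 2.0 for u, v in zip(p1, p2))


# Usage: two nadir photos (identity rotation) taken 40 m apart at 100 m height.
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
f = 0.035  # focal length in metres (hypothetical)
c1, c2 = (0.0, 0.0, 100.0), (40.0, 0.0, 100.0)
ground_point = (10.0, 5.0, 2.0)
xy1 = project(ground_point, c1, identity, f)
xy2 = project(ground_point, c2, identity, f)
print(triangulate(c1, ray_direction(xy1, identity, f), c2, ray_direction(xy2, identity, f)))
# approximately (10.0, 5.0, 2.0)
```

With noisy image measurements the rays no longer meet, and the midpoint gives a reasonable estimate; production software usually solves a least-squares problem over all photos in which the point appears.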

This principle means that each point of the object must be captured in several photos taken from different viewpoints. In aerial photography this is achieved by a high overlap between individual images, usually 60-80% of the photo area. The drone flies automatically according to a flight plan: after the area, the sensor, the flight height and other parameters are entered, the optimal flight route and the shooting interval needed for the required overlap are calculated. These parameters also allow the spatial resolution of the resulting model to be estimated. During the flight, the GNSS coordinates of each photo (and optionally the sensor orientation) are recorded for the subsequent calculation.
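The flight-plan arithmetic can be sketched with similar triangles. This is a simplified sketch, not the planning software's algorithm: it assumes a nadir camera over flat terrain, square pixels, and the sensor's long side oriented across the flight line. The ground footprint follows from sensor size × height / focal length, the ground sampling distance (GSD) from the footprint divided by the pixel count, and the exposure and flight-line spacing from the required overlaps. The camera parameters are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Camera:
    sensor_width_mm: float   # sensor side oriented across the flight line
    sensor_height_mm: float  # sensor side oriented along the flight line
    focal_length_mm: float
    image_width_px: int      # pixels across the flight line


def flight_plan(cam: Camera, height_m: float, speed_m_s: float,
                forward_overlap: float = 0.8, side_overlap: float = 0.7) -> dict:
    """Nadir imaging over flat terrain: GSD and spacing for the requested overlaps."""
    # Similar triangles: ground footprint = sensor size * height / focal length.
    across_m = cam.sensor_width_mm * height_m / cam.focal_length_mm
    along_m = cam.sensor_height_mm * height_m / cam.focal_length_mm
    photo_spacing = along_m * (1.0 - forward_overlap)  # distance between exposures
    return {
        "gsd_cm": 100.0 * across_m / cam.image_width_px,  # ground size of one pixel
        "photo_spacing_m": photo_spacing,
        "line_spacing_m": across_m * (1.0 - side_overlap),  # distance between flight lines
        "shot_interval_s": photo_spacing / speed_m_s,
    }


def height_for_gsd(cam: Camera, gsd_cm: float) -> float:
    """Flight height (m) needed to reach a target ground sampling distance."""
    return gsd_cm / 100.0 * cam.image_width_px * cam.focal_length_mm / cam.sensor_width_mm


cam = Camera(sensor_width_mm=13.2, sensor_height_mm=8.8, focal_length_mm=8.8,
             image_width_px=5472)
print(flight_plan(cam, height_m=100.0, speed_m_s=10.0))
print(height_for_gsd(cam, 0.5))
```

For this hypothetical camera at 100 m the GSD is about 2.7 cm/px, with an exposure roughly every 20 m and flight lines about 45 m apart. Reaching 0.5 cm/px would require flying at only about 18 m, which shows why resolution, flight height and flight time must be traded off.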

Reconstruction of the model is based on this basic principle, but in practice it is a complex computational task. Hundreds or even thousands of photographs are taken during a flight, and thousands of key points are automatically identified in each of them. Each key point is matched across all the photographs in which it appears, and triangulation is carried out using the interior and exterior orientation elements of those photographs. At this point the calculation becomes more complicated: the exterior orientation elements (the position and tilt of the sensor in space) are measured only with relatively low accuracy. Similarly, the interior orientation elements (the geometric parameters of the sensor and optics) are known, but they are also subject to errors due to manufacturing inaccuracies and optical distortion of the lens (these errors are compensated by calibrating the particular sensor). The algorithm therefore treats all these values as approximate. The calculation is then performed iteratively: all parameters are gradually optimized so that the total deviation is minimized (a procedure known in photogrammetry as bundle adjustment).
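The iterative optimization can be illustrated on a much smaller problem. A full bundle adjustment refines all camera poses, interior parameters and 3D points simultaneously; the sketch below refines only one unknown, an approximate (GNSS) camera centre, from ground points with known coordinates. It uses Gauss-Newton iterations that minimize the reprojection error. Simplifying assumptions: a nadir camera with no rotation, a known focal length in pixels, no lens distortion, and a numerical Jacobian. All names and data are hypothetical.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def project(p: Vec3, c: Sequence[float], f: float) -> Tuple[float, float]:
    """Collinearity equations for a nadir camera with projection centre c (f in pixels)."""
    dz = p[2] - c[2]
    return (-f * (p[0] - c[0]) / dz, -f * (p[1] - c[1]) / dz)


def residuals(c, points, observed, f) -> List[float]:
    """Reprojection errors (predicted minus observed image coordinates)."""
    r: List[float] = []
    for p, (u, v) in zip(points, observed):
        pu, pv = project(p, c, f)
        r.extend((pu - u, pv - v))
    return r


def det3(m) -> float:
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(a, b) -> List[float]:
    """Solve the 3x3 linear system a x = b by Cramer's rule."""
    d = det3(a)
    return [det3([[b[i] if j == k else a[i][j] for j in range(3)] for i in range(3)]) / d
            for k in range(3)]


def refine_centre(c0: Vec3, points, observed, f: float, iterations: int = 10,
                  h: float = 1e-6) -> Vec3:
    """Gauss-Newton refinement of an approximate camera centre."""
    c = list(c0)
    for _ in range(iterations):
        r = residuals(c, points, observed, f)
        # Numerical Jacobian: one column per unknown coordinate of the centre.
        cols = []
        for k in range(3):
            shifted = list(c)
            shifted[k] += h
            r_shift = residuals(shifted, points, observed, f)
            cols.append([(rs - r0) / h for rs, r0 in zip(r_shift, r)])
        # Normal equations: (J^T J) delta = -J^T r
        jtj = [[sum(x * y for x, y in zip(cols[i], cols[j])) for j in range(3)]
               for i in range(3)]
        jtr = [-sum(x * y for x, y in zip(cols[i], r)) for i in range(3)]
        c = [ci + di for ci, di in zip(c, solve3(jtj, jtr))]
    return tuple(c)


# Hypothetical control points (m); a "true" centre is used only to simulate observations.
gcps = [(0.0, 0.0, 0.0), (50.0, 0.0, 2.0), (0.0, 50.0, 1.0), (50.0, 50.0, 3.0), (25.0, 25.0, 10.0)]
true_centre = (10.0, 20.0, 120.0)
f_px = 4000.0
obs = [project(p, true_centre, f_px) for p in gcps]
gnss_guess = (13.0, 17.0, 115.0)  # approximate position, several metres off
print(refine_centre(gnss_guess, gcps, obs, f_px))  # converges close to (10, 20, 120)
```

The structure is the same as in the full problem: predict image coordinates from the current parameter estimates, linearize, solve the normal equations for a correction, and repeat until the corrections become negligible.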

A successful calculation results in a "point cloud", i.e. a set of points of the photographed object in three-dimensional space. These points represent the geometry of the object's surface, and precise measurements can already be made at this stage. The cloud is also an intermediate step for other outputs. For example, a Digital Surface Model (DSM) is created by interpolating the surface between the points, and a Digital Terrain Model (DTM) by filtering out surface structures such as buildings and vegetation; both are used for further cartographic measurement and processing. We can also build a 3D mesh model covered with the photographic texture, which is primarily suited for visualization. Another product is the orthophotomap. It is made by back-projecting the photographs onto the Digital Surface Model with its known heights, which eliminates the geometric distortions of the photographs caused by varying distances to the camera and varying terrain heights. Such a map can therefore be used for precise measurement.
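Deriving a DSM and a DTM from a point cloud can be illustrated with a small sketch. It assumes inverse-distance-weighted (IDW) interpolation onto a regular grid for the surface model. For the terrain model it uses a deliberately crude ground filter that keeps only points close to the lowest point in each coarse cell. Production ground filters (for example progressive morphological or cloth-simulation filters) are considerably more robust, and this brute-force version scales poorly with the number of points. Names, parameters and data are hypothetical.

```python
from typing import Dict, List, Optional, Sequence, Tuple

Point3 = Tuple[float, float, float]


def idw(points: Sequence[Point3], x: float, y: float,
        radius: float, power: float = 2.0) -> Optional[float]:
    """Inverse-distance-weighted height at (x, y) from points within `radius`."""
    num = den = 0.0
    for px, py, pz in points:
        d2 = (px - x) ** 2 + (py - y) ** 2
        if d2 == 0.0:
            return pz  # a point lies exactly on the grid node
        if d2 <= radius * radius:
            w = d2 ** (-power / 2.0)  # weight = 1 / distance**power
            num += w * pz
            den += w
    return num / den if den > 0.0 else None  # None = no data


def crude_ground_filter(points: Sequence[Point3], cell: float = 5.0,
                        tolerance: float = 0.3) -> List[Point3]:
    """Keep points lying within `tolerance` of the lowest point of their coarse cell."""
    def key(p: Point3) -> Tuple[int, int]:
        return int(p[0] // cell), int(p[1] // cell)

    lowest: Dict[Tuple[int, int], float] = {}
    for p in points:
        lowest[key(p)] = min(p[2], lowest.get(key(p), p[2]))
    return [p for p in points if p[2] - lowest[key(p)] <= tolerance]


def rasterize(points: Sequence[Point3], x0: float, y0: float, nx: int, ny: int,
              step: float, radius: float) -> List[List[Optional[float]]]:
    """Regular grid of heights; row j lies at y0 + j*step, column i at x0 + i*step."""
    return [[idw(points, x0 + i * step, y0 + j * step, radius) for i in range(nx)]
            for j in range(ny)]


# Synthetic cloud: flat ground (z = 0) on a 1 m grid plus one roof point at 10 m.
cloud = [(float(x), float(y), 0.0) for x in range(10) for y in range(10)]
cloud.append((2.5, 2.5, 10.0))
dsm = rasterize(cloud, 0.5, 0.5, 9, 9, 1.0, radius=1.0)
dtm = rasterize(crude_ground_filter(cloud), 0.5, 0.5, 9, 9, 1.0, radius=1.0)
print(dsm[2][2], dtm[2][2])  # 10.0 0.0
```

The DSM keeps the roof point, while the DTM, built only from the filtered ground points, shows bare terrain at the same location.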